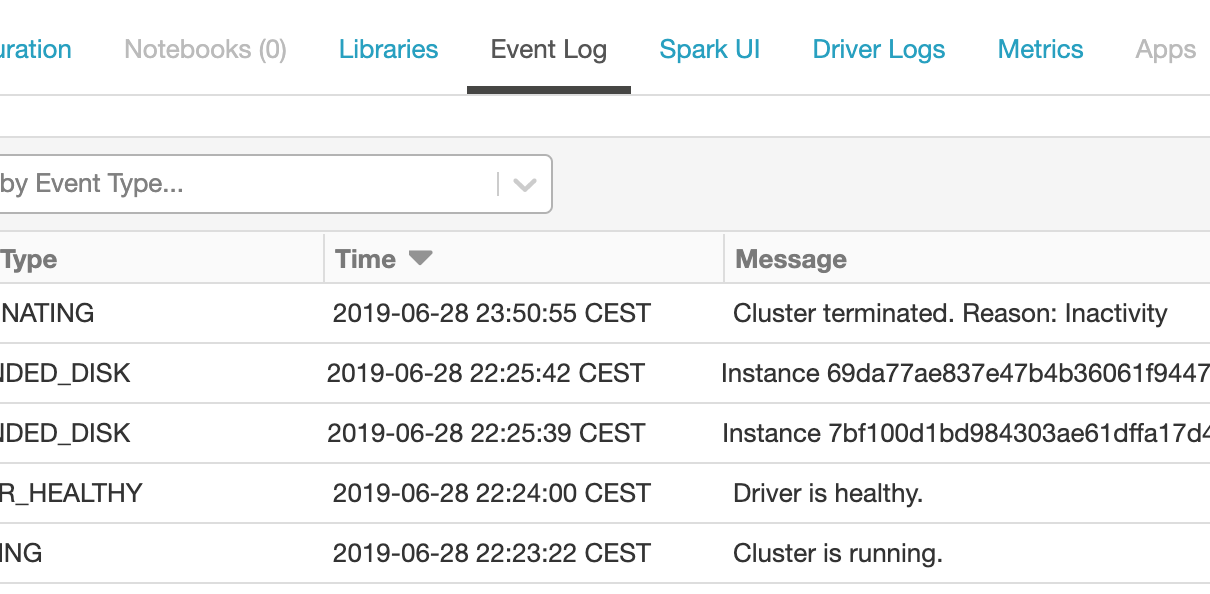

To run analytics and alerts on Azure Databricks events, the best practice is to process cluster logs using cluster log delivery and to set up the Spark monitoring library to ingest events into Azure Log Analytics. In some cases, however, it may be sufficient to set up a lightweight event ingestion pipeline that pushes events from the […]

Tag: monitor

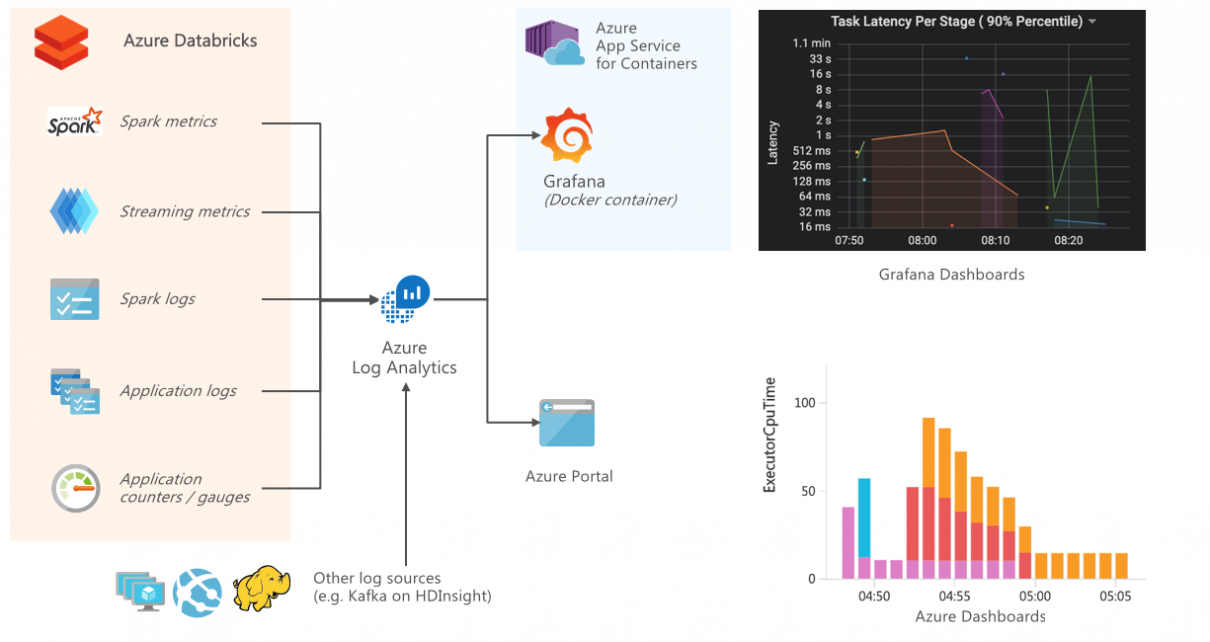

Monitoring and Logging in Azure Databricks with Azure Log Analytics and Grafana

Connecting Azure Databricks with Log Analytics lets you monitor and trace each layer of a Spark workload, including performance and resource usage on the host and JVM, as well as Spark metrics and application-level logging. You can test this integration end-to-end by following the accompanying tutorial on Monitoring Azure Databricks with Azure Log Analytics and […]