Azure Pipelines offers a variety of extensions for integrating Terraform: an official one from Microsoft, which has a number of limitations and has not received any updates for years, and a very popular one developed by my colleague Charles Zipp, which offers great functionality, although not all enterprises wish to install community extensions for Azure […]

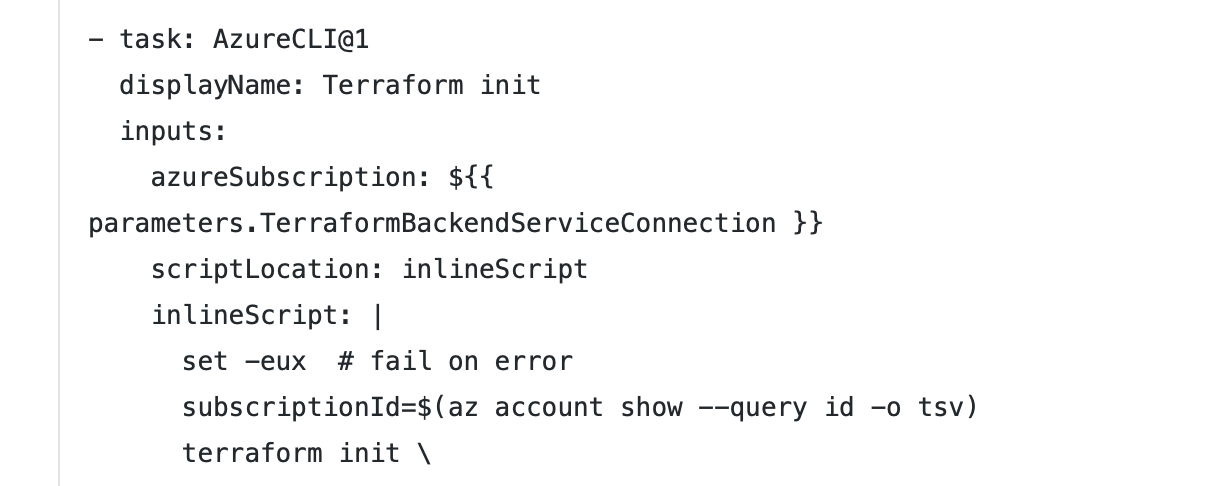

Managing Terraform outputs in Azure Pipelines

You can use Terraform as a single source of configuration for multiple pipelines. This lets you centralize configuration across your project, such as your naming strategy for resources. After you run terraform apply, the Terraform state (usually a blob in Azure Storage) contains the values of your defined Terraform outputs. In your output.tf: The Azure […]
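
As a rough illustration of the idea in this teaser (not the approach from the full post, which is truncated here), the sketch below turns the JSON printed by `terraform output -json` into Azure Pipelines `task.setvariable` logging commands. The function name `to_pipeline_commands` and the sample output name `storage_account_name` are hypothetical and chosen only for this example.

```python
import json


def to_pipeline_commands(terraform_output_json: str) -> list[str]:
    """Convert `terraform output -json` text into Azure Pipelines logging commands.

    Hypothetical helper for illustration; not part of Terraform or Azure Pipelines.
    """
    outputs = json.loads(terraform_output_json)
    commands = []
    for name, entry in outputs.items():
        value = entry["value"]
        # Non-string values (numbers, lists, maps) are re-serialized as JSON text.
        text = value if isinstance(value, str) else json.dumps(value)
        # Outputs marked sensitive in Terraform become secret pipeline variables.
        secret = ";issecret=true" if entry.get("sensitive") else ""
        commands.append(f"##vso[task.setvariable variable={name}{secret}]{text}")
    return commands


if __name__ == "__main__":
    # Assumed sample in the shape produced by `terraform output -json`.
    sample = json.dumps({
        "storage_account_name": {
            "sensitive": False,
            "type": "string",
            "value": "stdemo001",
        },
    })
    for line in to_pipeline_commands(sample):
        print(line)
```

In a pipeline step, printing these lines to standard output would make each Terraform output available as a variable to later steps in the same job.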

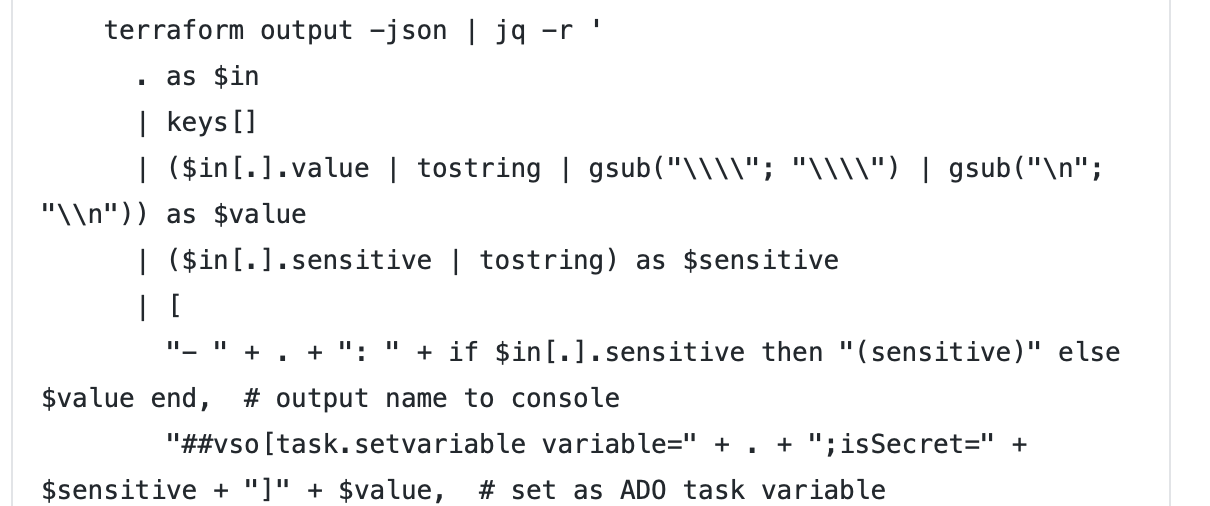

Data Lineage in Azure Databricks with Spline

The Spline open-source project can be used to automatically capture data lineage information from Spark jobs, and provide an interactive GUI to search and visualize data lineage information. We provide an Azure DevOps template project that automates the deployment of an end-to-end demo project in your environment, using Azure Databricks, Cosmos DB and Azure App […]

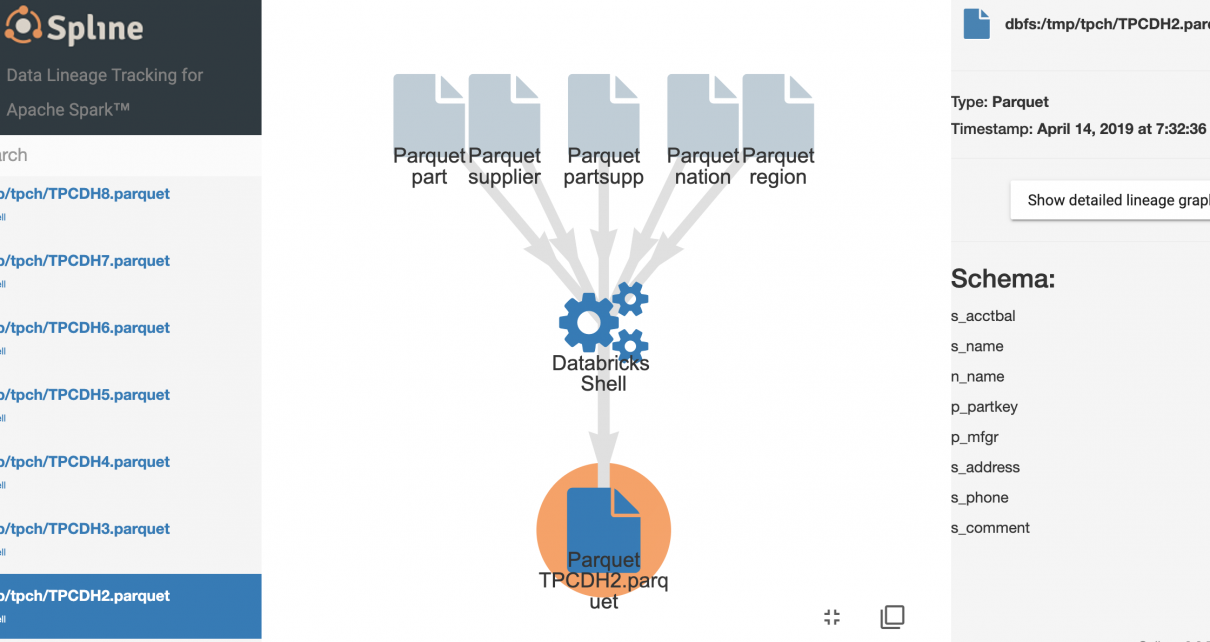

Monitoring and Logging in Azure Databricks with Azure Log Analytics and Grafana

Connecting Azure Databricks with Log Analytics allows monitoring and tracing each layer within Spark workloads, including the performance and resource usage on the host and JVM, as well as Spark metrics and application-level logging. You can easily test this integration end-to-end by following the accompanying tutorial on Monitoring Azure Databricks with Azure Log Analytics and […]

DevOps in Azure with Databricks and Data Factory

Building simple deployment pipelines to synchronize Databricks notebooks across environments is easy, and such a pipeline could fit the needs of small teams working on simple projects. Yet, a more sophisticated application includes other types of resources that need to be provisioned in concert and securely connected, such as Data Factory pipelines, storage accounts and […]

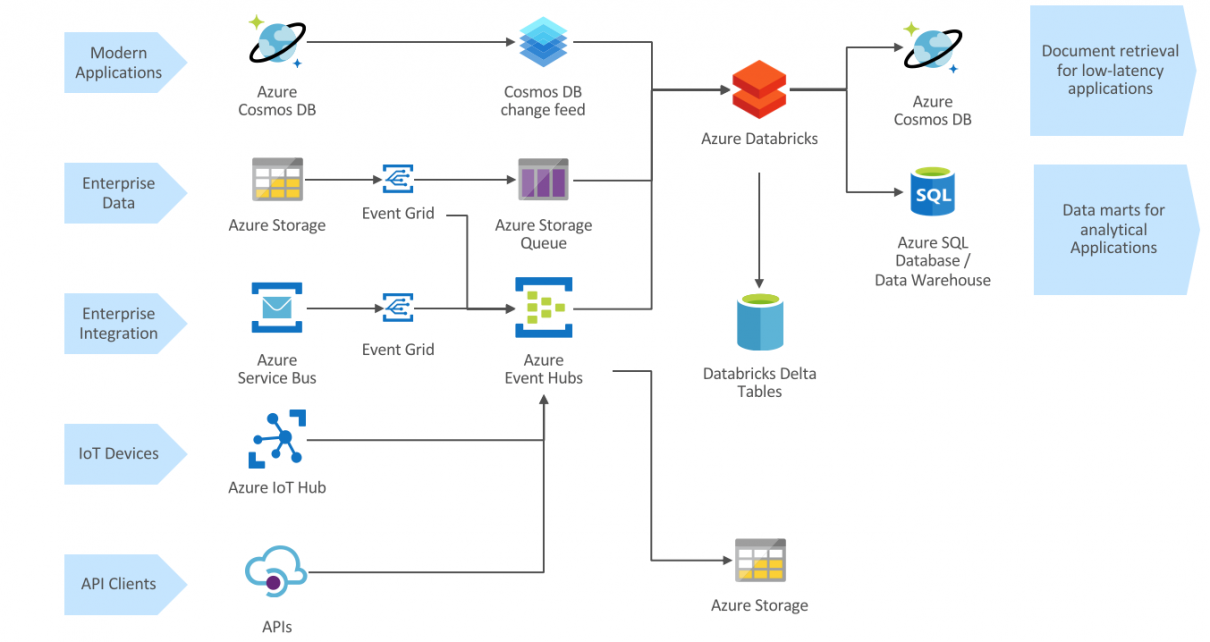

Event-based analytical data processing with Azure Databricks

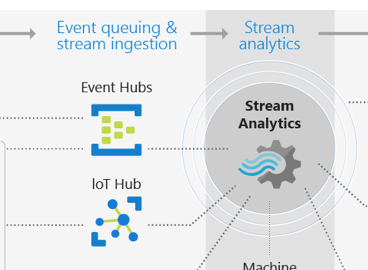

Cloud-native streaming architecture: Overview. Modern data analytics architectures should embrace the high flexibility required for today’s business environment, where the only certainty for every enterprise is that the ability to harness explosive volumes of data in real time is emerging as a key source of competitive advantage. Fortunately, cloud platforms allow high scalability and […]

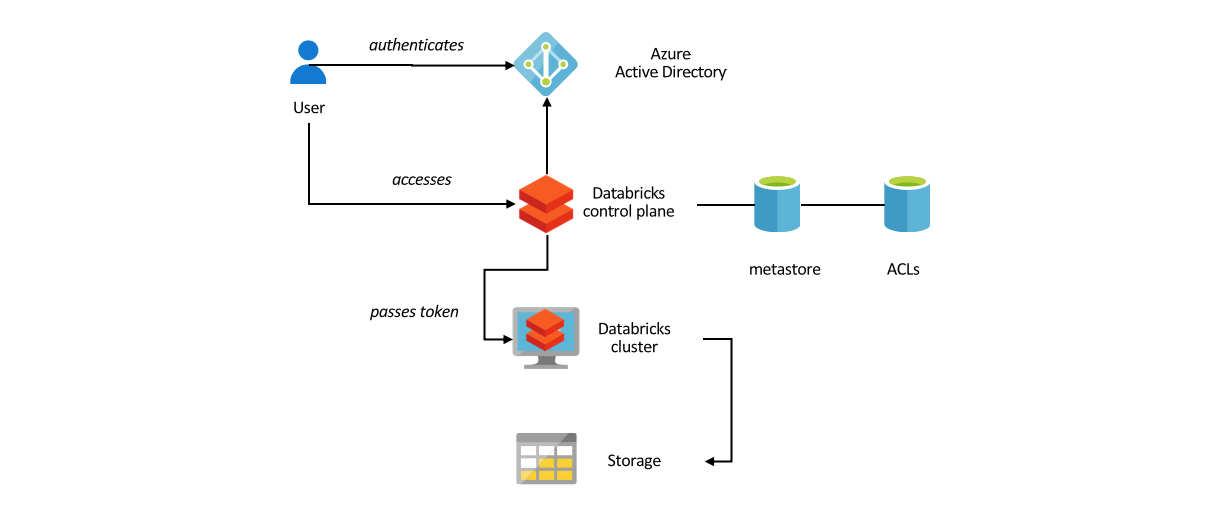

Data-level security in Azure Databricks

This is part 2 of our series on Databricks security, following Network Isolation for Azure Databricks. The simplest way to provide data-level security in Azure Databricks is to use fixed account keys or service principals for accessing data in Blob storage or Data Lake Storage. This grants every user of the Databricks cluster access to […]

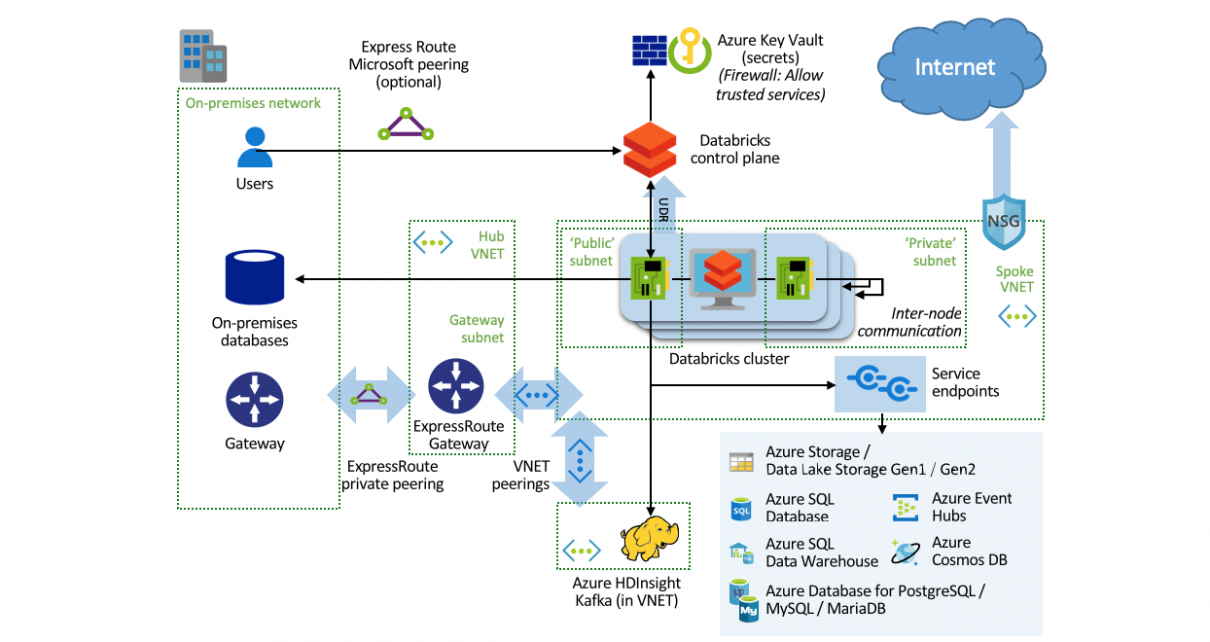

Network Isolation for Azure Databricks

For the highest level of security in an Azure Databricks deployment, clusters can be deployed in a custom Virtual Network. With the default setup, inbound traffic is locked down, but outbound traffic is unrestricted for ease of use. The network can be configured to restrict outbound traffic. For data science and exploratory environments, it is […]

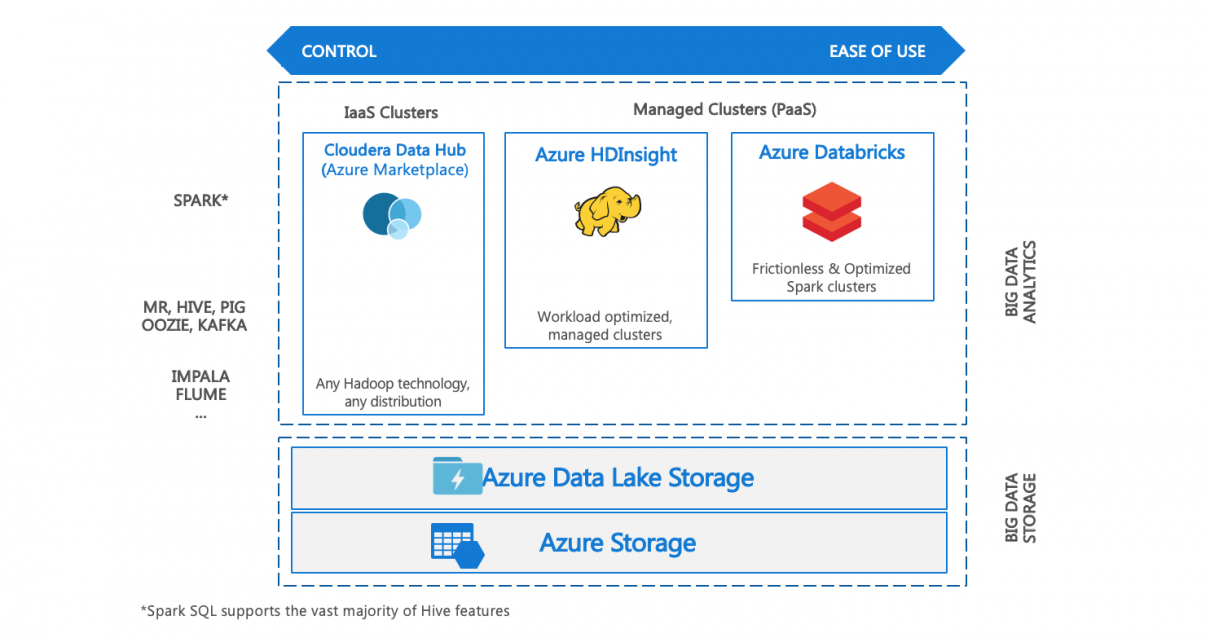

Choosing a Big Data Environment on Azure

I am often asked which Big Data computing environment to choose on Azure. The answer depends heavily on the workload, the legacy system (if any), and the skill set of the development and operations teams. Here is a (necessarily heavily simplified) overview of the main options and decision criteria I usually apply. Hadoop […]

Expert Systems for Predictive Maintenance

In my work as a Cloud & AI Architect at Microsoft, customers often identify field service as one of the first application areas for introducing Artificial Intelligence in their businesses. Especially with remote equipment, many companies are frustrated with the impact of downtime due to recurring causes that can be resolved quickly, but require a field service […]